sherpa-onnx

An open-source project that supports a range of speech recognition and speech synthesis functions

Product Details

sherpa-onnx is a speech recognition and speech synthesis project built on next-generation Kaldi. It uses onnxruntime for inference and supports a range of speech functions, including speech-to-text (ASR), text-to-speech (TTS), speaker recognition, speaker verification, language recognition, and keyword detection. It runs on many platforms and operating systems, including embedded systems, Android, iOS, Raspberry Pi, RISC-V boards, and servers.

Main Features

How to Use

Target Users

sherpa-onnx is aimed at developers and researchers, especially those who need speech recognition and speech synthesis on several different platforms. It provides APIs for C++, C, Python, Go, C#, Java, Kotlin, JavaScript, and Swift, so developers from different backgrounds can use it from a language they already know.

Examples

Real-time speech-to-text on Android devices with sherpa-onnx.

Batch speech recognition on a server with sherpa-onnx.

Keyword detection on embedded systems with sherpa-onnx.

Related Recommendations

Reverb

Reverb is open-source inference code for speech recognition and speaker segmentation models. It uses the WeNet framework for speech recognition (ASR) and the Pyannote framework for speaker segmentation. It provides detailed model descriptions, and the models can be downloaded from Hugging Face. Reverb aims to give developers and researchers high-quality speech recognition and speaker segmentation tools for a variety of speech processing tasks.

Realtime API

Realtime API is a low-latency voice interaction API from OpenAI that lets developers build fast speech-to-speech experiences into their applications. It supports natural speech-to-speech conversations and handles interruptions, similar to ChatGPT's Advanced Voice Mode. It connects over WebSocket and supports function calling, so a voice assistant can respond to user requests, trigger actions, or bring in new context. With this API, developers no longer need to chain multiple models together to build a voice experience; a single API can deliver a natural conversation.

Deepgram Voice Agent API

The Deepgram Voice Agent API is a unified speech-to-speech API for natural-sounding conversations between humans and machines. It is powered by speech recognition and speech synthesis models that let an agent listen, think, and speak naturally in real time. Deepgram positions the API as part of its push toward voice-first AI, integrating generative AI so that businesses can deploy smooth, human-like voice agents.

seed-vc

seed-vc is a voice conversion model based on the SEED-TTS architecture. It performs zero-shot voice conversion, that is, it can convert speech to a target voice without being trained on that speaker's data. It performs well on audio quality and timbre similarity, and has high research and application value.

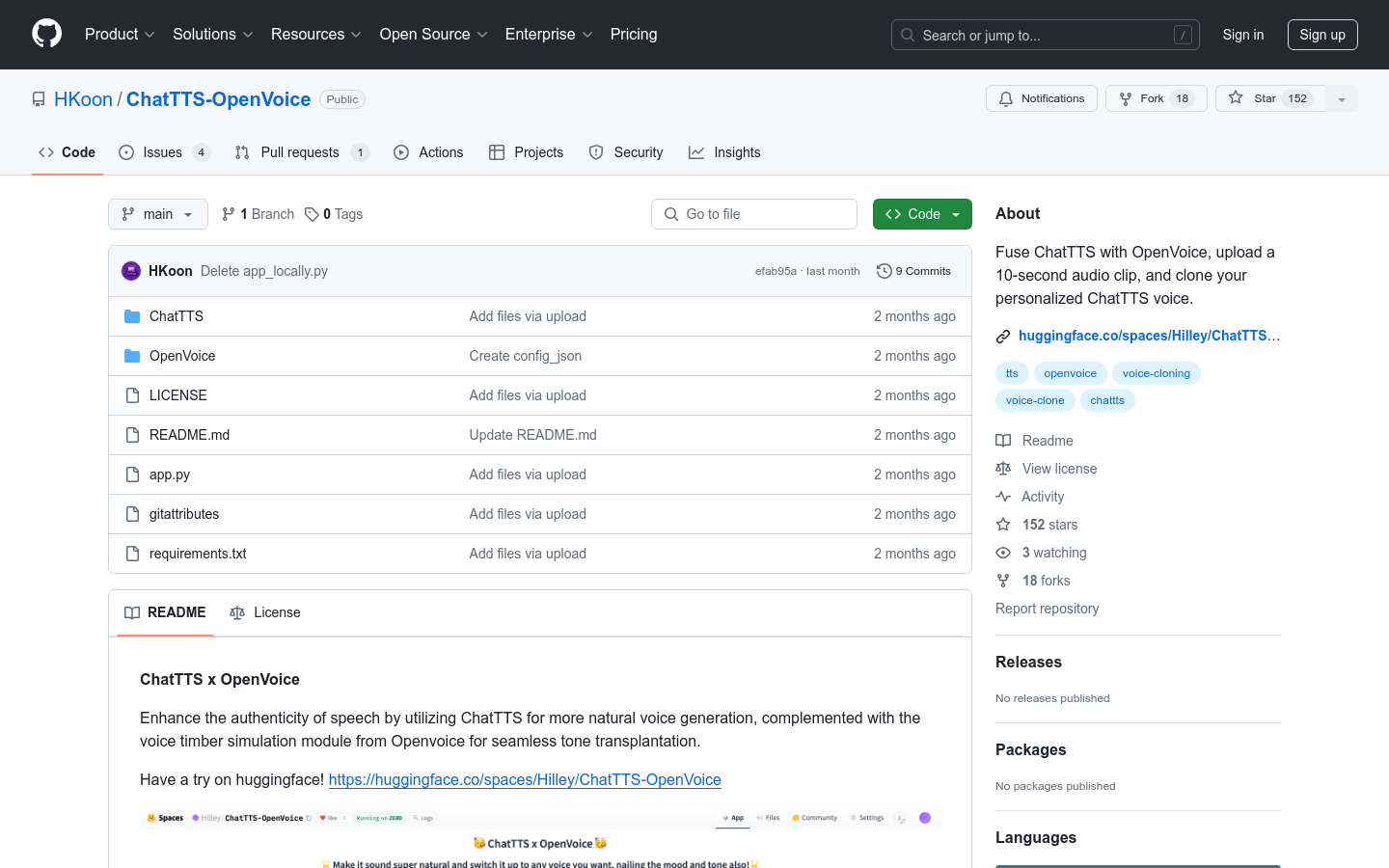

ChatTTS-OpenVoice

ChatTTS-OpenVoice is a voice cloning model that combines ChatTTS and OpenVoice. From an uploaded 10-second audio clip, it clones a personalized voice and generates more natural-sounding speech. This matters for speech synthesis because it offers a new way to generate lifelike speech for applications such as virtual assistants and audiobooks.

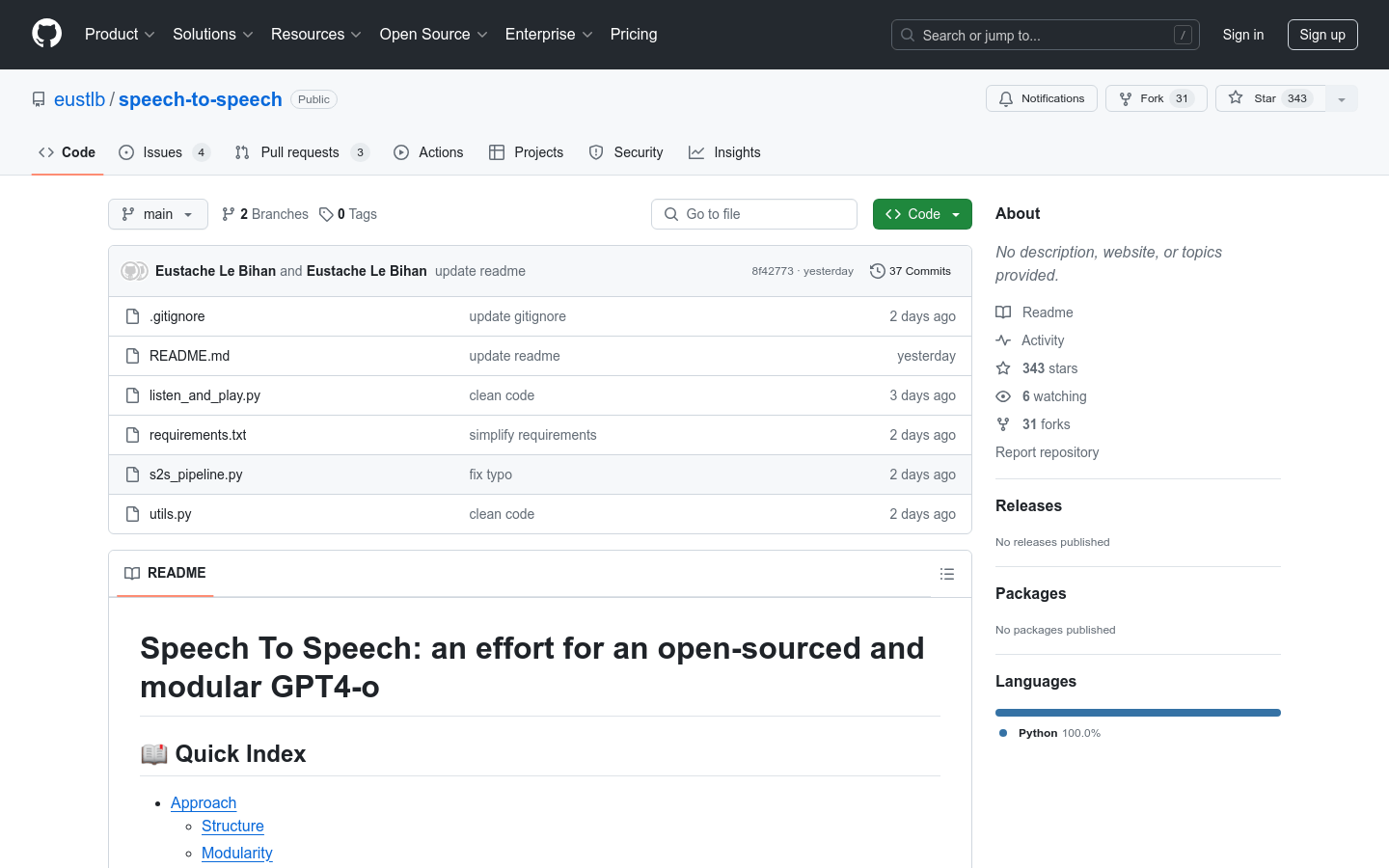

speech-to-speech

speech-to-speech is an open-source, modular project that aims to reproduce GPT-4o-style voice interaction. It implements speech-to-speech as a cascade of components: voice activity detection, speech-to-text, a language model, and text-to-speech. It builds on the Transformers library and models available on the Hugging Face Hub, which makes it highly modular and flexible.
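The cascade described above can be pictured as four swappable stages chained together. The following is only a minimal sketch of that idea, not the project's actual code: every function and class name here is hypothetical, and each stage is a toy stand-in for a real model.

```python
from dataclasses import dataclass
from typing import Callable, List


def detect_speech(frames: List[float], threshold: float = 0.1) -> List[float]:
    """Toy voice activity detection: keep samples louder than the threshold."""
    return [s for s in frames if abs(s) > threshold]


def transcribe(speech: List[float]) -> str:
    """Hypothetical stand-in for a speech-to-text model."""
    return f"<transcript of {len(speech)} samples>"


def generate_reply(text: str) -> str:
    """Hypothetical stand-in for a language model."""
    return f"reply to {text}"


def synthesize(text: str) -> List[float]:
    """Hypothetical stand-in for text-to-speech: one silent sample per character."""
    return [0.0] * len(text)


@dataclass
class SpeechToSpeechPipeline:
    """Chains the four stages; any stage can be replaced independently."""
    vad: Callable[[List[float]], List[float]]
    stt: Callable[[List[float]], str]
    lm: Callable[[str], str]
    tts: Callable[[str], List[float]]

    def run(self, frames: List[float]) -> List[float]:
        speech = self.vad(frames)
        if not speech:
            # Nothing was detected as speech, so skip the later stages.
            return []
        return self.tts(self.lm(self.stt(speech)))


pipeline = SpeechToSpeechPipeline(detect_speech, transcribe, generate_reply, synthesize)
audio_out = pipeline.run([0.5, 0.0, -0.3])
```

Because each stage is just a callable with a narrow input and output type, swapping in a different speech-to-text or text-to-speech model only means passing a different function to the pipeline.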

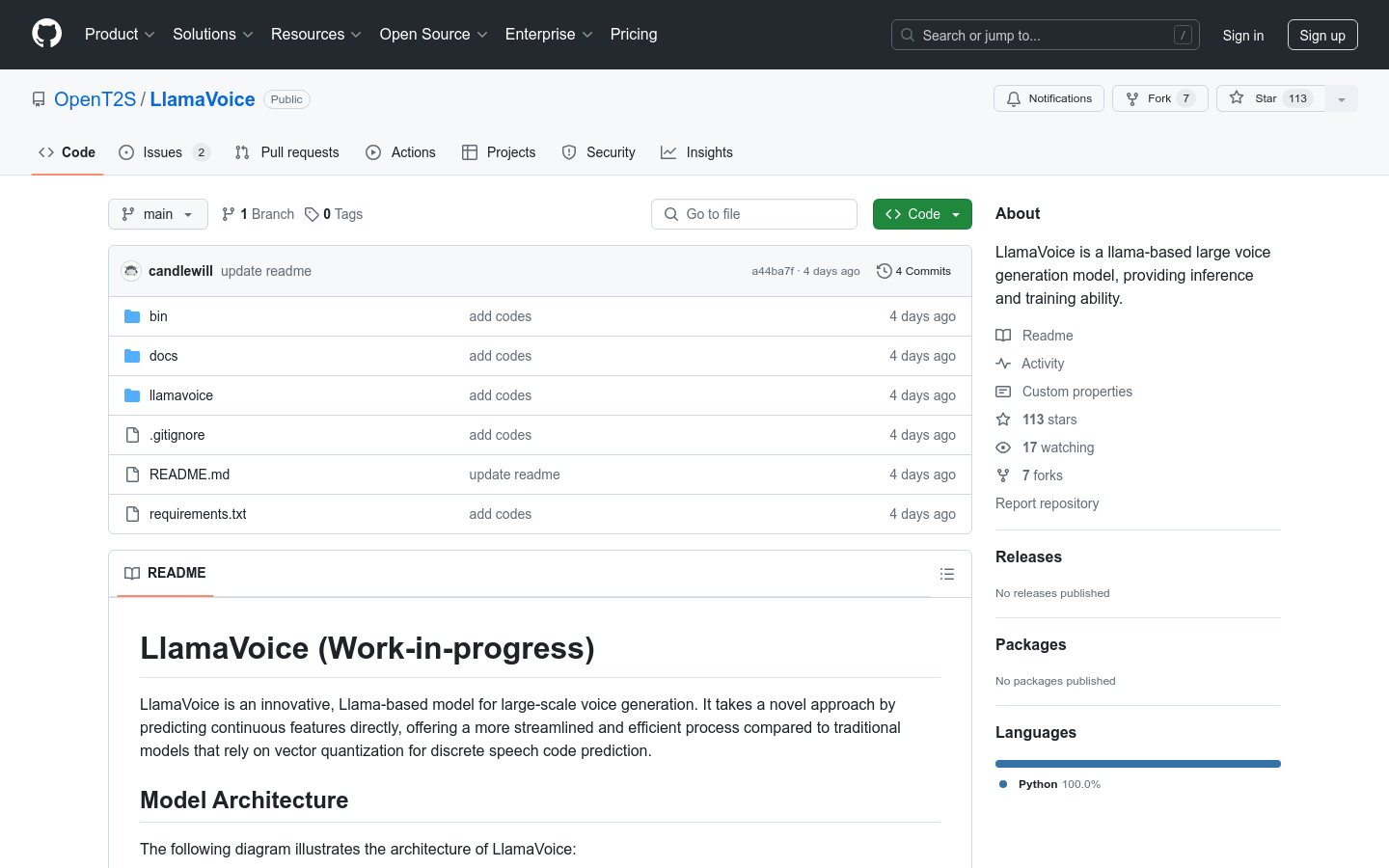

LlamaVoice

LlamaVoice is a large-scale speech generation model based on the Llama model. It predicts continuous features directly, which gives smoother and more efficient processing than traditional vector quantization models that rely on predicting discrete speech codes. Its key features include continuous feature prediction, variational autoencoder (VAE) latent feature prediction, joint training, advanced sampling strategies, and flow-based enhancement.

ElevenLabs AI Audio API

ElevenLabs AI Audio API provides high-quality, low-latency speech synthesis in multiple languages and is suitable for chatbots, agents, websites, and applications. The API supports enterprise-level requirements, ensuring data security and compliance with SOC 2 and GDPR.

ChatTTS_Speaker

ChatTTS_Speaker is an experimental project based on the ERes2NetV2 speaker recognition model. It scores timbres for stability and labels them, helping users choose a timbre that is stable and meets their needs. The project is open source and supports listening to and downloading sound samples online.

seed-tts-eval

seed-tts-eval is a test set for evaluating a model's zero-shot speech generation capabilities. It provides an objective, cross-domain evaluation set built from samples drawn from public English and Mandarin corpora, used to measure a model's performance on several objective metrics. It uses 1000 samples from the Common Voice dataset and 2000 samples from the DiDiSpeech-2 dataset.
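The description above does not say which objective metrics are measured. One metric commonly used for generated speech is word error rate (WER): the synthesized audio is transcribed by an ASR system and the transcript is compared with the input text. The sketch below assumes that setup and is not taken from seed-tts-eval itself; the function name is hypothetical, and it splits on whitespace, so Mandarin text would need character-level splitting instead.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance between the two texts, divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substituted word out of three reference words.
wer = word_error_rate("the cat sat", "the dog sat")
```

A lower value means the ASR transcript of the generated speech is closer to the text the model was asked to speak.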

ChatTTS-ui

ChatTTS-ui provides a web interface and an API for the ChatTTS project, so users can run speech synthesis from a web page or call it remotely through the API. It supports a variety of timbre options, and users can customize synthesis parameters such as laughter and pauses. The project gives speech synthesis an easy-to-use interface and lowers the technical barrier to using it.

ChatTTS

ChatTTS is an open-source text-to-speech (TTS) model that converts text to speech. It is intended primarily for academic research and educational purposes and is not intended for commercial or illegal use. It uses deep learning to generate natural, smooth speech and is suited to those who research and develop speech synthesis technology.

SpeechGPT

SpeechGPT is a multimodal language model with inherent cross-modal dialogue capabilities. It can perceive and generate multimodal content and follow multimodal human instructions. Related projects include SpeechGPT-Gen, a speech generation model that scales chain-of-information generation; SpeechAgents, which simulates human communication with a multimodal multi-agent system; and SpeechTokenizer, a unified speech tokenizer for speech language models. Release dates and related information for these models and datasets can be found on the official website.