InternVL2_5-2B

A multimodal large language model that supports deep interaction between images and text

Product Details

InternVL 2.5 is an advanced multimodal large language model (MLLM) series that builds on InternVL 2.0. It keeps the same core model architecture but significantly improves the training and testing strategies as well as data quality. The series pairs a newly incrementally pretrained InternViT vision encoder with various pretrained large language models, such as InternLM 2.5 and Qwen 2.5, connected through randomly initialized MLP projectors. InternVL 2.5 accepts multi-image and video input, and its dynamic high-resolution training approach improves performance on multimodal data.
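Dynamic high-resolution processing splits each image into fixed-size tiles arranged in the grid whose aspect ratio best matches the image. The sketch below is modeled on the image preprocessing example published with the InternVL model cards, but it is not the official code: the 448-pixel tile size, the 12-tile cap, the extra thumbnail tile and the figure of 256 visual tokens per tile (a 32 x 32 grid of 14-pixel patches, downsampled 4x by pixel shuffle) are assumptions about InternVL2_5-2B's defaults, and `find_tile_grid` and `tile_plan` are hypothetical helpers. It only computes the tiling plan; it does not resize or crop any pixels.

```python
# Sketch of InternVL-style dynamic high-resolution tiling (assumed defaults, hypothetical helpers).


def find_tile_grid(width: int, height: int, min_num: int = 1,
                   max_num: int = 12, image_size: int = 448) -> tuple[int, int]:
    """Pick the (cols, rows) grid whose aspect ratio best matches the image."""
    aspect = width / height
    # All grids whose tile count lies in [min_num, max_num], smallest first.
    candidates = sorted(
        {(i, j)
         for i in range(1, max_num + 1)
         for j in range(1, max_num + 1)
         if min_num <= i * j <= max_num},
        key=lambda g: (g[0] * g[1], g[0], g[1]),
    )
    area = width * height
    best, best_diff = (1, 1), float("inf")
    for cols, rows in candidates:
        diff = abs(aspect - cols / rows)
        if diff < best_diff:
            best, best_diff = (cols, rows), diff
        # On a tie, switch to the larger grid only if the image has enough
        # pixels to fill more than half of it.
        elif diff == best_diff and area > 0.5 * image_size ** 2 * cols * rows:
            best = (cols, rows)
    return best


def tile_plan(width: int, height: int, max_num: int = 12, image_size: int = 448,
              use_thumbnail: bool = True, tokens_per_tile: int = 256):
    """Return (cols, rows, number of tiles, visual tokens) for one image."""
    cols, rows = find_tile_grid(width, height, max_num=max_num, image_size=image_size)
    n_tiles = cols * rows
    # A downscaled thumbnail of the whole image is appended when the image is split.
    if use_thumbnail and n_tiles > 1:
        n_tiles += 1
    return cols, rows, n_tiles, n_tiles * tokens_per_tile


if __name__ == "__main__":
    for size in [(448, 448), (896, 448), (1920, 1080)]:
        print(size, tile_plan(*size))
```

For a 1920 x 1080 frame this selects a 4 x 2 grid plus a thumbnail, i.e. nine tiles (2,304 visual tokens under the assumptions above), which is why high-resolution inputs cost far more of the context window than a single 448 x 448 image.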

Target Users

The target audience is researchers, developers, and enterprises, especially in application scenarios that need to process and understand multimodal data, such as combined images and text. With its multimodal understanding and generation capabilities, InternVL2_5-2B is suitable for building intelligent image-text applications such as image captioning, visual question answering, and multimodal dialogue systems.

Examples

Use the InternVL2_5-2B model to generate detailed descriptions of product images for e-commerce platforms.

In education, the model can provide image-assisted language learning materials that enhance the learning experience.

In security monitoring, its video understanding capabilities can be used to automatically identify and respond to abnormal behavior.

Related Recommendations

![FLUX.1 Krea [dev]](https://pic.chinaz.com/ai/2025/08/01/25080110475283667344.jpg)

FLUX.1 Krea [dev]

FLUX.1 Krea [dev] is a 12 billion parameter rectified flow transformer for generating high-quality images from text descriptions. It was trained with guidance distillation to make inference more efficient, and its open weights are intended to support scientific research and artistic creation. The model emphasizes photographic aesthetics and strong prompt adherence, making it a strong competitor to closed-source alternatives. Generated outputs can be used for personal, scientific, and commercial purposes, enabling innovative workflows.
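Guided (guidance) distillation is usually described in terms of classifier-free guidance (CFG). The block below is a generic formulation, not FLUX's documented training recipe: a guided prediction normally needs two network evaluations per sampling step (conditional and unconditional), and a distilled student v_phi is trained to reproduce that combination in a single evaluation, taking the guidance scale w as an extra input. Writing it with a velocity v, as used in rectified-flow models, is an assumption of this sketch.

```latex
\begin{aligned}
\tilde{v}_\theta(x_t, t, c, w) &= v_\theta(x_t, t, \varnothing)
  + w\,\bigl(v_\theta(x_t, t, c) - v_\theta(x_t, t, \varnothing)\bigr) \\
\mathcal{L}(\phi) &= \mathbb{E}_{x_t,\,t,\,c,\,w}\Bigl[\bigl\lVert v_\phi(x_t, t, c, w)
  - \tilde{v}_\theta(x_t, t, c, w)\bigr\rVert_2^2\Bigr]
\end{aligned}
```

Here v_theta is the teacher, c the text condition and the empty-set symbol the unconditional (empty) prompt. Once trained, the student needs one forward pass per step instead of two, which is where the efficiency gain comes from.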

MuAPI

WAN 2.1 LoRA T2V is a tool that generates videos from text prompts. By training custom LoRA modules, users can tailor the generated videos, which makes the tool suitable for brand storytelling, fan content, and stylized animation. It offers a highly customizable video generation experience.
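Customization of this kind typically relies on low-rank adaptation (LoRA): the pretrained weight matrix W stays frozen and only a small low-rank update B A is trained. The sketch below illustrates that generic technique in plain Python; it is not MuAPI's or WAN 2.1's API, and `lora_forward` and `lora_param_count` are hypothetical helpers. The alpha / r scaling follows the convention of the original LoRA formulation.

```python
# Generic LoRA sketch in plain Python (hypothetical helpers, not a product API).
Matrix = list[list[float]]
Vector = list[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector,
                 alpha: float, r: int) -> Vector:
    """Compute W x + (alpha / r) * B (A x).

    W: frozen pretrained weights, shape (d_out, d_in)
    A: trainable down-projection, shape (r, d_in)
    B: trainable up-projection, shape (d_out, r)
    """
    base = matvec(W, x)              # frozen path
    delta = matvec(B, matvec(A, x))  # trainable low-rank path
    scale = alpha / r
    return [b + scale * d for b, d in zip(base, delta)]


def lora_param_count(d_in: int, d_out: int, r: int) -> int:
    """Number of trainable parameters in A and B for one adapted matrix."""
    return r * (d_in + d_out)


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]
    A = [[1.0, 1.0]]    # rank r = 1
    B = [[1.0], [0.0]]
    print(lora_forward(W, A, B, [1.0, 2.0], alpha=2.0, r=1))  # [7.0, 2.0]
    # Full fine-tuning of a 4096 x 4096 matrix vs. a rank-8 adapter.
    print(4096 * 4096, lora_param_count(4096, 4096, 8))  # 16777216 65536
```

Because B is conventionally initialized to zeros, an untrained adapter leaves the base model's output unchanged. A rank-8 adapter on a 4096 x 4096 projection trains about 0.4% of that matrix's parameters, which is what makes per-style customization cheap.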

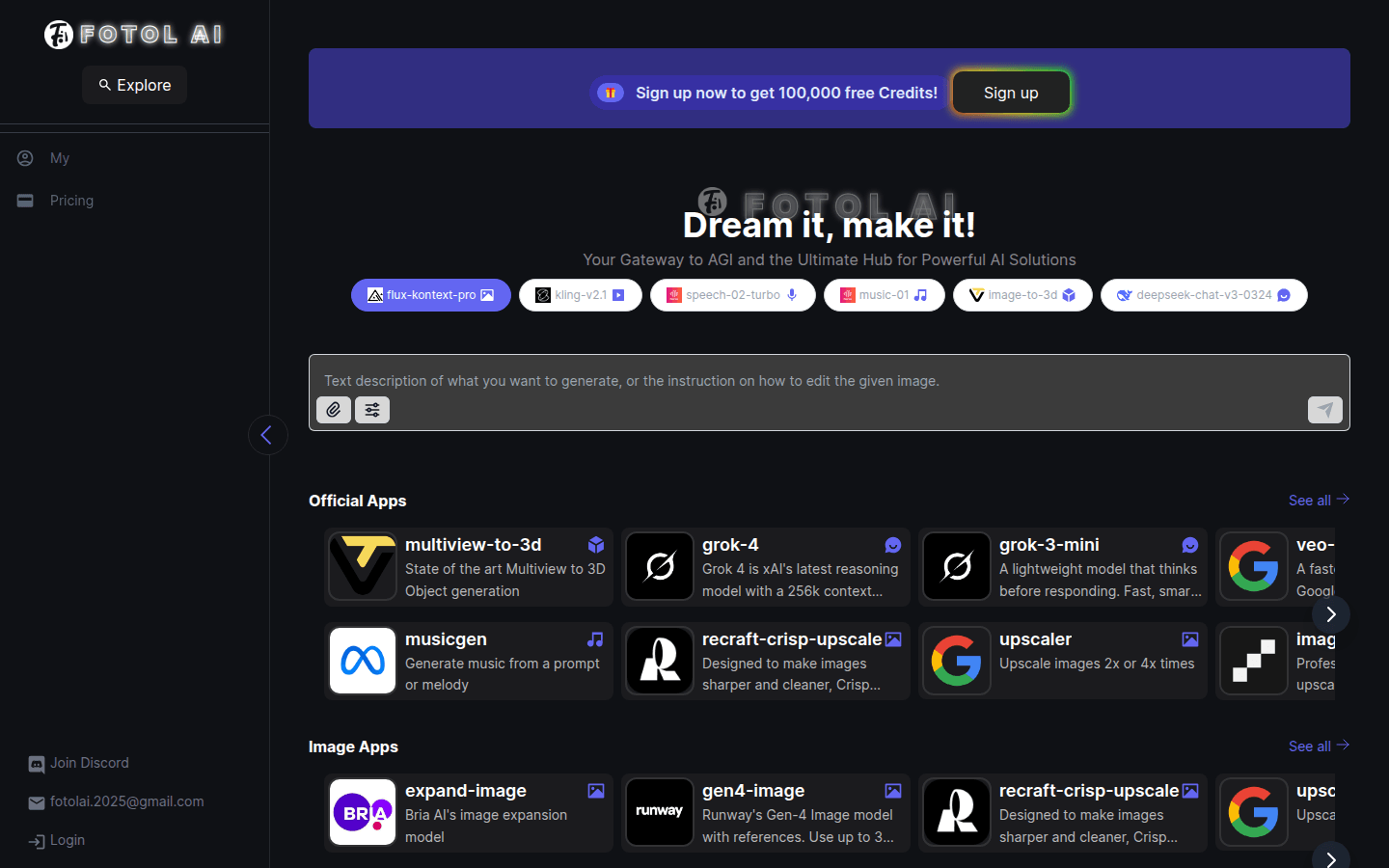

Fotol AI

Fotol AI is a website that provides AGI technology and services, dedicated to offering users powerful artificial intelligence solutions. Its main advantages include advanced technical support, rich functional modules, and a wide range of applications. Fotol AI aims to become users' platform of choice for exploring AGI, offering flexible and diverse AI solutions.

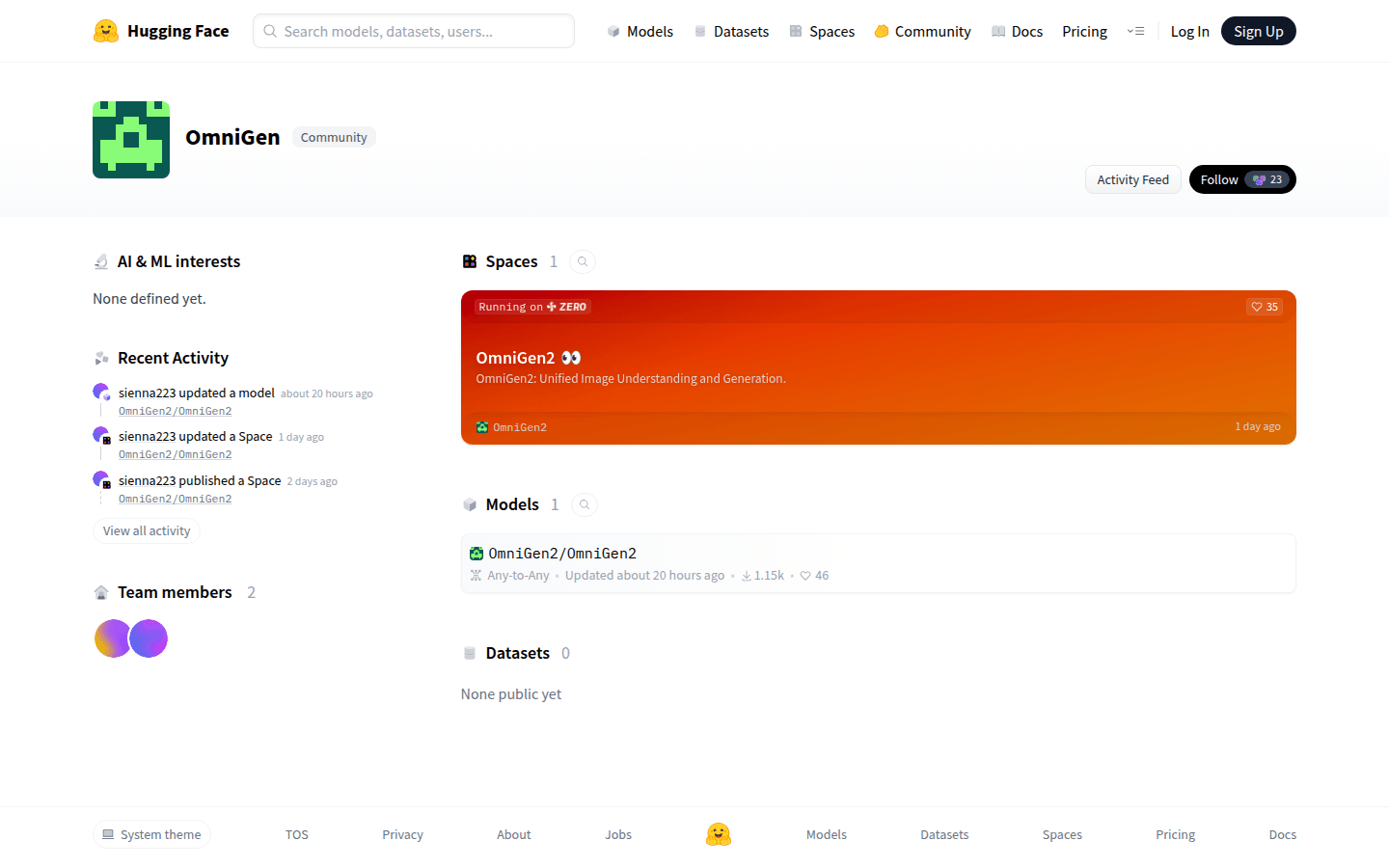

OmniGen2

OmniGen2 is an efficient multimodal generation model that combines a vision-language model with a diffusion model to support visual understanding, image generation, and image editing. Because it is open source, it gives researchers and developers a strong foundation for exploring personalized and controllable generative AI.

Bagel

BAGEL is a scalable, unified multimodal model that aims to change the way AI interacts with complex systems. Its capabilities include conversational reasoning, image generation and editing, style transfer, navigation, composition, and a thinking mode. It is pretrained on video and web data, which provides a foundation for generating high-fidelity, photorealistic images.

FastVLM

FastVLM is an efficient vision encoding model designed specifically for vision-language models. It uses FastViTHD, a novel hybrid vision encoder, to reduce both the encoding time for high-resolution images and the number of output tokens, giving the model outstanding speed and accuracy. FastVLM is primarily intended to give developers strong vision-language processing capabilities across a range of application scenarios, especially on mobile devices that require fast responses.

F Lite

F Lite is a large-scale diffusion model with 10 billion parameters, developed by Freepik and Fal and trained exclusively on copyright-safe, safe-for-work (SFW) content. It is trained on Freepik's internal dataset of approximately 80 million legally compliant images, marking the first time a publicly available model has focused on legal, safe content at this scale. A technical report documents the model in detail, and the model is distributed under the CreativeML Open RAIL-M license. The model is designed to promote openness and usability in artificial intelligence.

Flex.2-preview

Flex.2 is billed as the most flexible text-to-image diffusion model available, with built-in inpainting and universal control inputs. It is an open-source, community-supported project that aims to help democratize artificial intelligence. Flex.2 has 8 billion parameters, supports inputs of up to 512 tokens, and is released under the OSI-approved Apache 2.0 license. The model can support a wide range of creative projects, and users can help improve it over time through feedback.

InternVL3

InternVL3 is an open-source multimodal large language model (MLLM) series released by OpenGVLab, with excellent multimodal perception and reasoning capabilities. The series comes in 7 sizes ranging from 1B to 78B parameters and can process text, images, video, and other inputs together, showing excellent overall performance. InternVL3 performs well in areas such as industrial image analysis and 3D visual perception, and its overall text performance even surpasses that of the Qwen2.5 series. Its open-source release provides strong support for multimodal application development and helps extend multimodal technology to more fields.

VisualCloze

VisualCloze is a general-purpose image generation framework based on visual in-context learning, designed to address the inefficiency of traditional task-specific models when requirements are diverse. The framework not only supports a variety of in-domain tasks but can also generalize to unseen tasks, using visual examples to help the model understand the task. This approach leverages the strong generative priors of advanced image infilling models, providing strong support for image generation.

Step-R1-V-Mini

Step-R1-V-Mini is a new multimodal reasoning model launched by StepFun. It accepts image and text input, produces text output, and has good instruction following and general capabilities. The model has been technically optimized for reasoning performance in multimodal collaborative scenarios: it adopts multimodal joint reinforcement learning and a training method that makes full use of multimodal synthetic data, effectively improving its ability to handle complex reasoning chains in image space. Step-R1-V-Mini has performed well on multiple public leaderboards, notably ranking first in China on the MathVision visual reasoning leaderboard, demonstrating strong performance in visual reasoning, mathematical logic, and coding. The model is officially available on the Step AI website, and an API is provided on the StepFun open platform for developers and researchers to try and use.

HiDream-I1

HiDream-I1 is a new open source image generation base model with 17 billion parameters that can generate high-quality images in seconds. The model is suitable for research and development and has performed well in multiple evaluations. It is efficient and flexible and suitable for a variety of creative design and generation tasks.

EasyControl

EasyControl is a framework that provides efficient and flexible control for Diffusion Transformers (DiT), aiming to address problems such as efficiency bottlenecks and limited model adaptability in the current DiT ecosystem. Its main advantages include support for combining multiple conditions, greater generation flexibility, and improved inference efficiency. The product builds on recent research results and is suited to areas such as image generation and style transfer.

RF-DETR

RF-DETR is a transformer-based real-time object detection model designed to deliver high accuracy and real-time performance on edge devices. It exceeds 60 AP on the Microsoft COCO benchmark, combining competitive accuracy with fast inference, and suits a variety of real-world application scenarios. RF-DETR targets real-world object detection problems and is suitable for industries that require efficient, accurate detection, such as security, autonomous driving, and intelligent monitoring.
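RF-DETR's headline number is COCO AP. As background, and not as RF-DETR code, the sketch below shows the matching criterion that metric is built on: a predicted box counts as a true positive only if its intersection over union (IoU) with a ground-truth box reaches a threshold, and COCO's primary AP averages over ten IoU thresholds from 0.50 to 0.95. The helper names are hypothetical, and the full metric additionally ranks detections by confidence, builds precision-recall curves, and averages over categories, none of which this sketch implements.

```python
# Sketch of the IoU matching criterion behind COCO-style AP (generic, not RF-DETR code).
Box = tuple[float, float, float, float]  # (x1, y1, x2, y2) with x2 > x1 and y2 > y1


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# COCO's primary AP averages over ten IoU thresholds: 0.50, 0.55, ..., 0.95.
COCO_IOU_THRESHOLDS = [round(0.50 + 0.05 * k, 2) for k in range(10)]


def thresholds_passed(pred: Box, gt: Box) -> list[float]:
    """IoU thresholds at which this prediction could count as a true positive."""
    overlap = iou(pred, gt)
    return [t for t in COCO_IOU_THRESHOLDS if overlap >= t]


if __name__ == "__main__":
    gt = (0.0, 0.0, 4.0, 4.0)
    pred = (0.0, 0.0, 4.0, 3.0)
    print(iou(pred, gt))                     # 0.75
    print(len(thresholds_passed(pred, gt)))  # 6
```

A box with IoU 0.75 clears only six of the ten thresholds, so a high COCO AP rewards tight localization, not just finding the object.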

Stable Virtual Camera

Stable Virtual Camera is a 1.3B-parameter generalist diffusion model developed by Stability AI; it is a transformer-based image-to-video model. Its significance lies in its support for Novel View Synthesis (NVS): given input views and a target camera, it generates new, 3D-consistent views of the scene. Its main advantages are the freedom to specify target camera trajectories, the ability to generate samples with large viewpoint changes and temporal smoothness, high consistency without additional Neural Radiance Field (NeRF) distillation, and the ability to generate high-quality, seamlessly looping videos up to half a minute long. The model is free for research and non-commercial use only and is positioned to provide innovative image-to-video solutions for researchers and non-commercial creators.

Flat Color - Style

Flat Color - Style is a LoRA model designed specifically for generating flat-color style images and videos. It is trained on top of the Wan Video model and produces a distinctive lineless, low-depth look, making it suitable for animation, illustration, and video generation. Its main advantages are reduced color bleeding and stronger blacks while still delivering high-quality visuals. It suits scenarios that call for clean, flat design, such as animation character design, illustration, and video production. The model is free to use and is designed to help creators quickly produce work with a modern, minimalist style.